Pages that are blocked by your robots.txt file may be reported as Excluded by ‘noindex’ tag in Google Search Console. If you want these pages to be indexed, you need to modify your robots.txt file.

Robots.txt files are used to keep search engines away from unnecessary pages on your website, such as categories, tags, and other low-value pages.

If you haven’t modified your robots.txt file, it may end up blocking your post URLs as well. In that case, you need to remove or modify the file.
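If you are not sure whether your current rules block a post, a small script can tell you. The sketch below uses Python's standard `urllib.robotparser` module. The rules, the `example.com` URLs, and the `is_blocked` helper are made up for illustration, and Python's parser applies the first matching rule, which may not match exactly how Google resolves conflicting rules.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text disallows `url` for `user_agent`."""
    parser = RobotFileParser()
    # parse() takes the file content as a list of lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# Hypothetical rules that block category and tag archives only.
RULES = """\
User-agent: *
Disallow: /category/
Disallow: /tag/
"""

# Hypothetical rules that block the whole site, posts included.
BLOCK_EVERYTHING = """\
User-agent: *
Disallow: /
"""

if __name__ == "__main__":
    for url in ("https://example.com/category/news/", "https://example.com/my-first-post/"):
        print(url, "blocked" if is_blocked(RULES, url) else "allowed")
```

With the first set of rules only the category URL is blocked. With `BLOCK_EVERYTHING`, the post URL is blocked too, which is the situation described above.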

How to fix the ‘noindex’ tag error in Google Search Console

To fix the ‘noindex’ error in Google Search Console (GSC), there are three steps you need to follow.

Regenerate or modify robots.txt: To fix this error, you need a properly written robots.txt file. If you don’t know how to edit the file by hand, you can use a robots.txt generator, which can help you create a clean robots.txt file that may also help your ranking.
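If you would rather not rely on an online generator, the file is simple enough to produce yourself. Here is a minimal sketch, assuming you only want to block a few archive paths for all crawlers. The `build_robots_txt` function and the `/category/` and `/tag/` paths are hypothetical, so replace them with the paths your own site uses.

```python
from urllib.robotparser import RobotFileParser


def build_robots_txt(blocked_paths):
    """Build robots.txt text that blocks only the given paths for every crawler."""
    lines = ["User-agent: *"]
    if blocked_paths:
        lines += [f"Disallow: {path}" for path in blocked_paths]
    else:
        # An empty Disallow value means nothing is blocked.
        lines.append("Disallow:")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    robots_txt = build_robots_txt(["/category/", "/tag/"])
    print(robots_txt)

    # Sanity check with Python's own parser: posts stay reachable, tag pages do not.
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    print(parser.can_fetch("Googlebot", "https://example.com/my-first-post/"))
    print(parser.can_fetch("Googlebot", "https://example.com/tag/seo/"))
```

Save the printed text as `robots.txt` in the root folder of your site so that it is reachable at `/robots.txt`.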

Use the URL Inspection tool: Another way to fix this error in Google Search Console is the URL Inspection tool. Google provides this tool so that webmasters can manually request indexing of a page. To use it, copy the page URL, paste it into the URL Inspection tool, and then click Request Indexing. This may help clear the ‘noindex’ tag error.

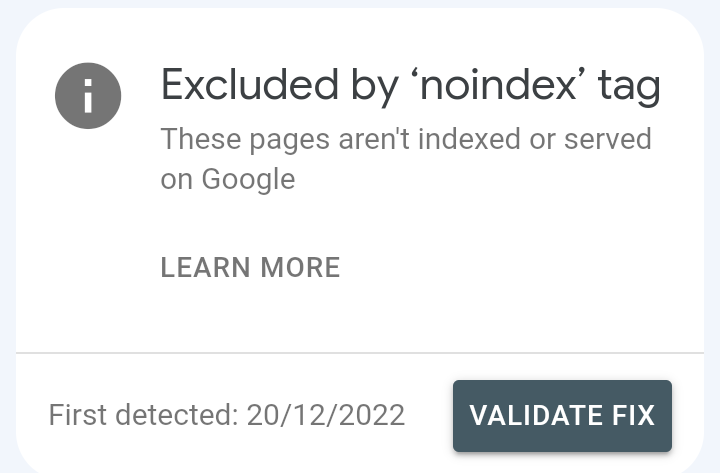

Start the validation process: To fix this error, you may also need to start the validation process in Search Console. Go to Google Search Console, open Pages, and click the ‘noindex’ error. Then start the validation process. This may help get the URLs excluded by the ‘noindex’ tag indexed.
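Before you start validation, it can be worth checking that the page itself no longer carries a noindex directive in its HTML. The sketch below is one rough way to do that with Python's standard `html.parser` module. It only looks at `<meta name="robots">` and `<meta name="googlebot">` tags in the HTML you give it, the `has_noindex` helper is a made-up name, and it does not check HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. <meta name>), so fall back to "".
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html: str) -> bool:
    """Return True if the HTML contains a robots meta tag with a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    print(has_noindex(page))
```

If this reports a noindex directive for a page you want in search results, remove the tag (often a setting in your SEO plugin or theme) before requesting validation.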

Is it necessary to fix the ‘noindex’ tag error?

No, it is not necessary to fix every ‘noindex’ tag error in Google Search Console (GSC), because the tag may be keeping out pages that have no value in search results. Indexing those pages is not necessary.